R&D at Mercedes-Benz Tech Innovation

Multimodal AI Fusion, Spatial Computing, and In-Vehicle XR

Note: More details will be added once the paper is published at its target venue.

Multimodal AI Fusion for Outside-the-Vehicle Referencing (OVR) using XR Inside the Moving Vehicle

Role: XR Researcher (Master's Thesis)

Organization: Mercedes-Benz Tech Innovation, Stuttgart, Germany

Keywords: Spatial Computing, Sensor Fusion, Transformers, Digital Twins, Human-Computer Interaction

TL;DR: I engineered a real-time multimodal XR pipeline fusing user gaze and speech to solve the Outside-the-Vehicle Referencing (OVR) problem. By architecting a custom Transformer-based fusion network and pioneering a synchronized moving-car data pipeline, the system achieved state-of-the-art accuracy, earning endorsement from Mercedes-Benz for future production R&D.

The Challenge: Contextual Referencing in Dynamic Environments

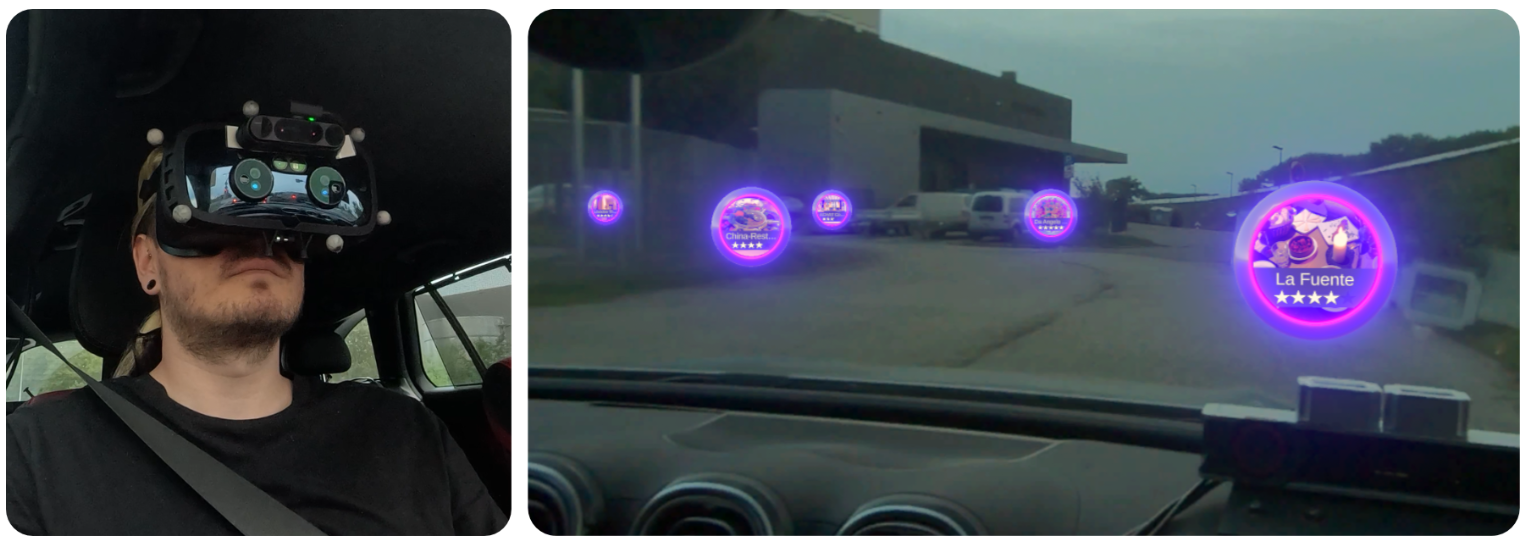

Traditional Extended Reality (XR) systems rely heavily on static environments or explicit pointing mechanisms (e.g., ray-casting via controllers). However, inside a moving vehicle, high-speed ego-motion and the cognitive load of passengers render explicit pointing unnatural.

Meanwhile, XR headsets have proved useful for passengers, helping them get information about the physical buildings that serve as their points of interest (POIs). The core issue, however, is that there is no robust way to accurately estimate a passenger’s target POI while the vehicle is in motion, a challenge known as Outside-the-Vehicle Referencing (OVR).

The objective was to design a frictionless, real-time spatial interaction paradigm that lets passengers simply look at a building through the window, ask a natural-language question (e.g., “When was that church built?”), and receive an accurate, context-aware response.
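To make the referencing problem concrete, here is a minimal geometric sketch in Python. It is not the Transformer-based fusion network from the thesis. It is only a naive gaze-only baseline that ranks candidate POIs by the angle between the gaze direction and the direction to each POI. The function names, the shared coordinate frame, and the example coordinates are all hypothetical.

```python
import math
from typing import Dict, List, Sequence


def angular_offset_deg(gaze_dir: Sequence[float],
                       eye_pos: Sequence[float],
                       poi_pos: Sequence[float]) -> float:
    """Angle in degrees between the gaze direction and the eye-to-POI direction.

    Assumption: all vectors are expressed in the same coordinate frame.
    """
    to_poi = [p - e for p, e in zip(poi_pos, eye_pos)]
    dot = sum(g * t for g, t in zip(gaze_dir, to_poi))
    norm = math.hypot(*gaze_dir) * math.hypot(*to_poi)
    if norm == 0.0:
        raise ValueError("gaze direction and eye-to-POI vector must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def rank_pois(gaze_dir: Sequence[float],
              eye_pos: Sequence[float],
              pois: Dict[str, Sequence[float]]) -> List[str]:
    """Return POI names sorted from smallest to largest angular offset."""
    return sorted(pois, key=lambda name: angular_offset_deg(gaze_dir, eye_pos, pois[name]))


# Hypothetical scene: the passenger looks along +x from the origin.
candidates = {
    "church": (50.0, 5.0, 0.0),
    "tower": (40.0, 30.0, 0.0),
    "museum": (-20.0, 10.0, 0.0),
}
ranking = rank_pois((1.0, 0.0, 0.0), (0.0, 0.0, 0.0), candidates)
print(ranking)
```

A gaze-only ranking like this is a plausible starting point, but it ignores what the passenger says. Combining gaze with speech is what the fusion approach described on this page is meant to address.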

Results & Impact

By exploiting the natural redundancy of human gaze and aligning it with semantic context, the final architecture represents a significant leap forward in automotive spatial computing.

- Performance: Achieved highly robust Rank-1 Accuracy and F1-Scores, outperforming existing state-of-the-art baselines by over 10% in dynamic, in-vehicle contexts.

- Industry Validation: The methodology and spatial fusion framework were validated by Mercedes-Benz management as a highly viable candidate for integration into future production R&D pipelines.
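For readers unfamiliar with the metrics named in the Performance bullet above, the following Python sketch shows how Rank-1 accuracy and a per-class F1 score are commonly computed. The scores and labels are made-up toy values, not results from this project, and the function names are illustrative only.

```python
from typing import Sequence


def rank1_accuracy(scores: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Fraction of samples whose highest-scoring candidate is the true target."""
    if not scores or len(scores) != len(targets):
        raise ValueError("scores and targets must be non-empty and equally long")
    hits = 0
    for row, target in zip(scores, targets):
        predicted = max(range(len(row)), key=row.__getitem__)
        hits += int(predicted == target)
    return hits / len(targets)


def f1_for_class(predictions: Sequence[int], targets: Sequence[int], positive: int) -> float:
    """F1 score treating `positive` as the positive class."""
    tp = sum(p == positive and t == positive for p, t in zip(predictions, targets))
    fp = sum(p == positive and t != positive for p, t in zip(predictions, targets))
    fn = sum(p != positive and t == positive for p, t in zip(predictions, targets))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Toy data: 4 referencing attempts, 3 candidate POIs each (hypothetical values).
toy_scores = [
    [0.1, 0.7, 0.2],
    [0.6, 0.3, 0.1],
    [0.2, 0.3, 0.5],
    [0.4, 0.5, 0.1],
]
toy_targets = [1, 0, 2, 0]
toy_predictions = [max(range(len(r)), key=r.__getitem__) for r in toy_scores]

acc = rank1_accuracy(toy_scores, toy_targets)
f1_poi0 = f1_for_class(toy_predictions, toy_targets, positive=0)
print(acc, f1_poi0)
```

In this toy example, three of the four top-ranked candidates match the target, so Rank-1 accuracy is 0.75. The F1 score for POI 0 is 2/3, because one of its two occurrences is missed.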

Tools & Technologies: PyTorch, Transformers, LLMs, Unity3D, Python, GNSS/INS Telemetry, Sensor Fusion.